Dimitria Electra Gatzia (University of Akron, University of Antwerp)

Abstract: Cognition can influence action. Your belief that it is raining outside, for example, may cause you to reach for the umbrella. Perception can also influence cognition. Seeing that no raindrops are falling, for example, may cause you to think that you don’t need to reach for an umbrella. The question that has fascinated philosophers and cognitive scientists for the past few decades, however, is whether cognition can influence perception. Can, for example, your desire for a rainy day cause you to see, hear, or feel raindrops when you walk outside? More generally, can our cognitive states (such as beliefs, desires or intentions) influence the way we see the external world? In the first part of this paper, I present evidence of top-down modulation in early vision. In the second part of the paper, I make a distinction between two types of top-down modulation. The first pertains to the unconscious visual ‘inferences’ the visual system makes as it ‘chooses’ among many possible representations to arrive at one that we experience as a conscious percept (or back-end effects). The second pertains to the cognitive states of perceivers, which may be used to alter the function the visual system computes (or front-end effects). I use this distinction to argue that evidence for top-down modulation in early vision need not threaten the Cognitive Impenetrability Thesis (CIT). Colour vision is used as a case study to show how empirical findings suggesting that colour experience is cognitively penetrated can be better explained without reference to cognitive penetration.

1. Introduction

Cognition can influence action. Your belief that it is raining outside, for example, may cause you to reach for an umbrella. Perception can also influence cognition. Seeing that no raindrops are falling, for example, may cause you to think that you don’t need to reach for an umbrella. The question that has fascinated philosophers and cognitive scientists for the past few decades, however, is whether cognition can influence perception. Can, for example, your desire for a rainy day cause you to see, hear, or feel raindrops when you walk outside? More generally, can our cognitive states (such as beliefs, desires, or intentions) influence the way we see the external world? The goal of this paper is to investigate this question.

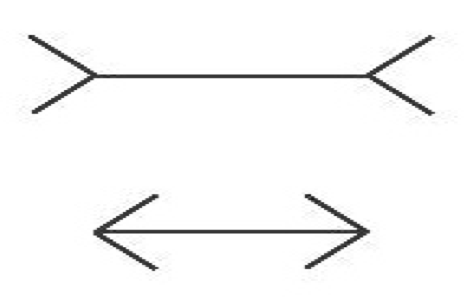

Cognitive penetration (CP) refers to the influence cognitive states, e.g., beliefs, desires, or intentions, have on perceptual experience. In the twentieth century, the possibility of cognitive penetrability in perception was the core tenet behind the New Look movement in psychology, which studied several alleged cases, albeit without appeal to a precise notion of cognitive penetration (Bruner and Goodman, 1947; Bruner and Postman, 1949). In the late eighties, stern criticism from philosophers and psychologists, who were primarily concerned with the characterization of a reliable visual system capable of representing the world accurately, led most to reject the claim that perception is cognitively penetrated (Fodor, 1983; Pylyshyn, 1984; Pylyshyn, 1999). By the late nineties, the Cognitive Impenetrability Thesis (CIT), according to which cognitive states do not influence the content (what the experience is about) or qualitative character (what the experience is like) of experience, had become the dominant view in philosophy. The Müller-Lyer illusion (see Fig. 1) has since served as the quintessential example for proponents of the CIT, as it seems to illustrate nicely that acquiring the belief that the lines have the same length cannot influence the perceptual content or qualitative character of one’s experience; one line continues to look shorter than the other even after one learns that they have the same length.

The current has once again changed. Recently, philosophers have used empirical studies to challenge the CIT (Macpherson, 2012; Siegel, 2012; Wu, 2013; Cecchi, 2014; Wu, in press). The debate about the possibility of cognitive penetrability in perception has primarily focused on early vision. Generally, proponents of the CIT allow the top-down modulation of late vision (which consists of high-level processes) but argue that early vision (which is restricted to low-level processes) is impervious to cognitive influences.

In what follows, I begin with the context in which to understand the debate about the CIT (section 2) and show that early vision is subject to top-down modulation (section 3). I argue that a distinction should be made between mere top-down modulation and cognitive penetration. While the former refers to the unconscious visual ‘inferences’ the visual system makes as it ‘chooses’ among many possible representations to arrive at one that we experience as a conscious percept, the latter refers to the purported influences the cognitive states of perceivers, e.g., their beliefs, desires, or intentions, have on their visual experiences (section 3). I use this distinction to argue that evidence for top-down modulation (even in early vision) need not threaten the CIT (section 4). Lastly, I use this distinction to show that empirical findings that have been used to argue that colour experience is cognitively penetrated can better be explained without reference to cognitive penetration (section 5).

2. Visual Perception: top-down vs. bottom-up modulation

Vision is not as straightforward as it appears to the observer. The only available information to the visual system is a given retinal image, which does not provide accurate or complete information about a given external or distal stimulus. Indeed, the mapping from retinal image to distal stimulus is not one-to-one, but rather one-to-many, meaning that identical distal stimuli (under different conditions of illumination or at different distances and orientations from a perceiver, and so on) can give rise to radically different retinal images while radically different distal stimuli can generate the same retinal image. Despite this, the visual system is somehow (we do not yet know the precise mechanisms) able to recover useful information about the environment (Brainard, 2009; Purves and Lotto, 2003).

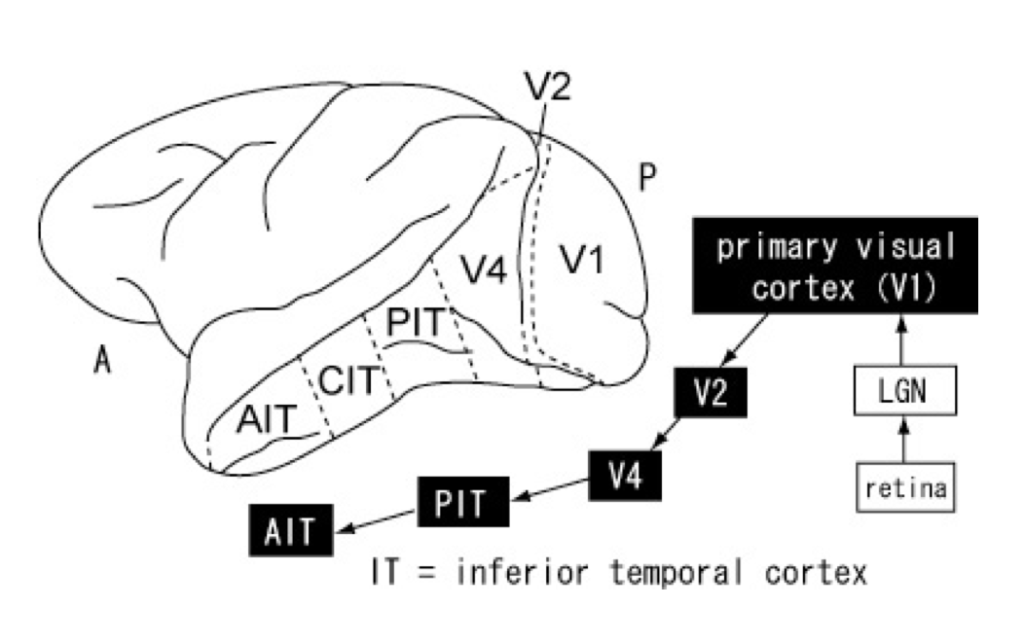

According to the dominant view in cognitive science, visual information is processed sequentially in a feedforward (or bottom-up) manner: visual inputs from subcortical areas such as the retina and the lateral geniculate nucleus (LGN) are transmitted along the ventral pathway[1] to the primary or striate (V1), pre-striate (V2), and V4 (which shows attentional modulation) cortices before reaching, first, the middle temporal cortex (MT or V5), and, then, the inferior temporal cortex (IT), which consists of the posterior (PIT), central (CIT), and anterior (AIT) cortices (see Fig. 2) (Yasuda et al., 2010).[2]

The dominance of the hierarchical (bottom-up) model of visual perception has led to the demarcation of some cortical areas (such as V4 and IT) as ‘higher-level’ to indicate that they involve steps at the top of the processing hierarchy and other cortical as well as subcortical areas (such as V1 and LGN respectively) as ‘lower-level’ to indicate that they involve steps at the bottom of the processing hierarchy. “Bottom-up” describes the direction of information processing: information is processed hierarchically from low-level subcortical and cortical areas to higher-level cortical areas. “Top-down” describes the opposite direction: information is processed from higher-level cortical areas to lower-level cortical and subcortical areas.

The question of whether information is processed in a bottom-up or top-down fashion is relevant to questions about the role of visual perception. For example, the view that information is processed in a bottom-up fashion indicates that the visual system is data-driven. This suggests that the role of visual perception is to form (for the most part) accurate representations of the external world (Marr, 1983). The view that information is processed in a top-down fashion, on the other hand, suggests that the visual system is cognition-driven. On this view, far from merely reconstructing visual scenes, the visual system constructs a “hypothesize reality” (Palmer, 1999).[3] This suggests that the role of visual perception is to provide information that is essential for successful behavior.[4]

The debate about the CIT can be placed within the debate about the role of visual perception. Those who adopt the bottom-up model of visual perception tend to deny that visual perception is top-down modulated and embrace the CIT. Those who adopt the top-down model postulate that visual perception is top-down modulated and reject the CIT. In a recent paper, Marchi and Newen (2015) argue that experiences of facial expressions are cognitively penetrated by higher-level cognitive states. To support this claim, they present a study by Carroll and Russell (1996) on the relevance of context in the recognition of facial expressions. Subjects were asked to evaluate the photograph of a woman’s face. In the absence of a context, subjects evaluated the face as expressing fear. However, when they were given a context (subjects were told that a woman who had made reservations for herself and her sister at an expensive restaurant was made to wait for over an hour while the maître d’ repeatedly gave available tables to other patrons), they evaluated the same face as expressing anger. After defining cognitive penetration in terms of the presence of an internal and mental causal dependency between a perceptual experience and a cognitive state (Stokes, 2013: 650; for a detailed definition see section 4), Marchi and Newen argue that the best explanation for these results is that subjects had two different experiences of the same face, not that subjects attributed different emotions to the same face by forming two different perceptual judgments. They conclude that the subjects’ perceptual experiences are penetrated by higher-level cognitive states. However, there is a third explanation, which Marchi and Newen do not consider, that seems to account for the results of this study even better than the two they discuss.

Firestone and Scholl (in press) have recently argued that studies attempting to show that visual experience is cognitively penetrated fall prey to various pitfalls. One of these pitfalls is failure to distinguish between response variations resulting from differences in perception and those resulting from differences in judgments. It is not always clear whether it is our cognitive states that affect our visual experience or merely the judgments we make on the basis of non-experiential information. Marchi and Newen (2015) take great pains to show that it is not the case that subjects in the above study attribute two different emotions to the same face by forming two different perceptual judgments. However, they neglect to consider another explanation associated with another of the pitfalls discussed by Firestone and Scholl, viz. “memory and recognition.” Specifically, the authors distinguish between two types of effects related to recognitional tasks: ‘front-end’ and ‘back-end’. A front-end effect on visual processing is distinct from the role high-level cognitive states such as memory play in object recognition. As such, it would support the claim that cognitive penetration occurs, since such an effect would indicate a top-down modulation effect in perception. A back-end effect on memory access, such as activating the relevant representations in memory when a stimulus is present (or even before a stimulus is presented, as when subjects who are hungry were found to be better at recognizing hunger words since they are related to what they are already thinking about), would not. Carroll and Russell’s study seems to be a case of a back-end effect on memory access: the context activates the relevant representations in memory when a stimulus (in this case the picture of the face) is presented and the subjects respond that they see the face as expressing anger.
In the absence of the context, the relevant representations in memory are not activated and the subjects respond that they see the same face as expressing fear. Marchi and Newen do not consider this possibility. And since their argument is an inference to the best explanation, they fail to show that this is a case of cognitive penetration.

This case illustrates that the occurrence of top-down modulation alone is insufficient to establish the occurrence of cognitive penetration. Indeed, “normal” perception is contingent on strong top-down modulation. For example, several studies indicate that schizophrenic subjects are less susceptible than healthy controls to three-dimensional depth inversion illusions such as the hollow-mask illusion, which occur when concave objects appear convex (Schneider et al., 1996; Schneider et al., 2002; Koethe et al., 2006; Dima et al., 2009; Keane et al., 2013). Using functional magnetic resonance imaging (fMRI), Dima and colleagues (2009) found that poor top-down modulation of the fronto-parietal network in schizophrenic subjects accounted for their diminished susceptibility to the hollow-mask illusion. The fact that schizophrenic subjects exhibit such perceptual abnormalities indicates that “normal” vision depends on strong top-down modulation; for, although poorer top-down modulation would give us an advantage in terms of reduced susceptibility to illusions, it would also decrease our ability to effectively interact with our environment. Indeed, studies show that subjects who recovered from prolonged blindness are also less susceptible to depth illusions (Kurson, 2007; Gregory 2004, 2009). This advantage, however, comes at a high cost: they are also unable to recognize familiar objects when they see them (possibly because their visual system is unable to activate the relevant representations in memory when a stimulus is present).[5] What is needed is an argument that top-down modulation involves the perceiver’s cognitive states (as opposed to high-level information processing systems of the visual system). For example, it requires showing that there is a front-end effect on visual perception itself. This point has been lost in the recent debate about cognitive penetration.
Even proponents of the CIT who acknowledge the occurrence of top-down modulation of visual perception have devoted their resources to establishing that “early vision” is not subject to top-down modulation. In what follows, I briefly show that even early vision is subject to top-down modulation and argue that there is no need for concern because the presence of top-down modulation on early vision need not threaten the CIT.

3. Top-down modulation in early vision

Theorists who frame the issue of the possibility of cognitive penetrability in terms of top-down modulation, by and large, do not deny the presence of top-down modulation in late vision. However, they argue that these findings do not threaten the CIT because top-down modulation does not extend to early vision (Pylyshyn, 1999; Raftopoulos, 2001). Marr (1976) was the first to use the term “early vision” to refer to early stages of visual cortical processing. Although he initially defined “early vision” functionally, as the first level of visual processing, for which he coined the term primal sketch, he later identified the primary visual cortex (V1) as the neuroanatomical area in which the primal sketch is constructed (see Fig. 2).[6] Unlike Marr, Pylyshyn (1999: 344) is reluctant to locate early vision neuroanatomically, noting that its neuroanatomical locus is not yet known with any precision. He too defines “early vision” functionally, but in terms of its psychophysical properties, which include “a mapping of various substages involved in computing stereo, motion, size, and lightness constancies, as well as the role of attention and learning” (Pylyshyn, 1999: 344). Raftopoulos (2009: Ch. 2) also defines “early vision” functionally, but unlike Pylyshyn, in terms of response latency: as the process lasting no more than 100-120 milliseconds (ms) post-stimulus onset; it is a pre-attentional stage, which includes lateral and recurrent processes devoid of cognitive signals.[7]

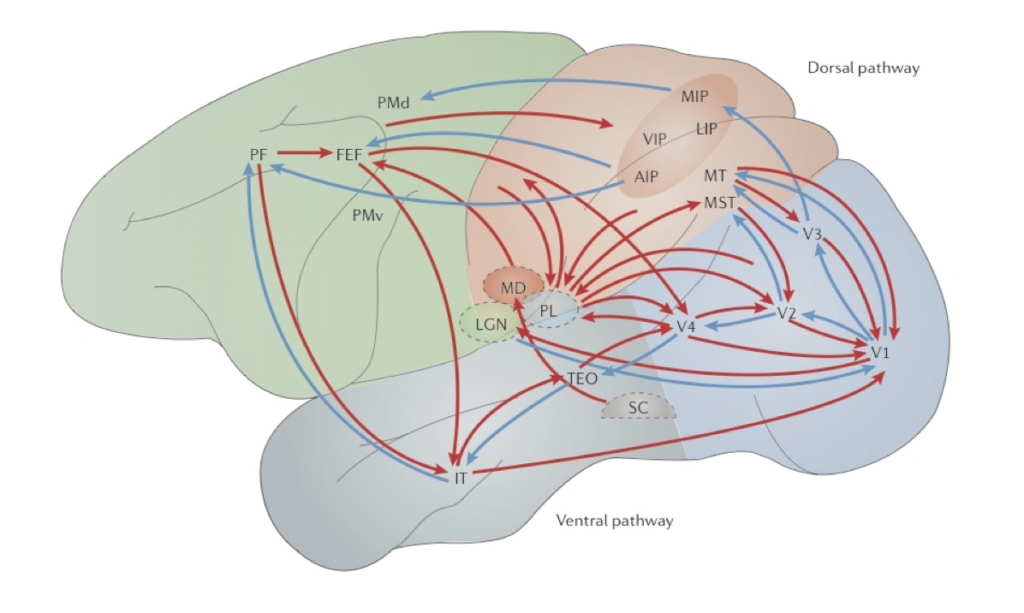

Marr saw the primary visual cortex (V1) as a low-level processing module where local features such as oriented edges or bars from the proximal image are extracted before being transmitted to higher-level visual areas for further processing (Marr, 1982; see also Hubel and Wiesel, 1978). Although Marr (1976) acknowledged that not all neural computations are feedforward (or bottom-up), he maintained that early visual stages are completed independently of later stages.[8] However, contrary to Marr, empirical findings suggest that V1 (a low-level cortical area) plays a central role in integrating and coordinating computations amongst the higher-level visual areas utilizing the recurrent network connections in the visual system (Deco and Lee, 2004; Mumford, 1996; Lee et al., 1998). For example, studies show that higher-level computations that involve high-resolution details such as fine geometry and spatial precision take place in V1 and are reflected in its neural activities that encode orientation and spatial information (Lee et al., 1998; Deco and Lee, 2004; Gilbert and Li, 2013). In addition, empirical data suggest that low-level computations cannot be completed prior to the commencement of high-level computations (Lee et al., 1998). For example, figure-ground separation (associated with low-level computations) and object recognition (associated with high-level computations) are interwoven and cannot progress in a simple feedforward fashion, but have to occur concurrently and interactively in constant feedforward and feedback loops that involve the entire circuit in the visual system (Pollen, 1999; Rao et al., 1999; Lee et al., 1998; Dayan et al., 1995). Top-down modulation is, in fact, seen at all stages of visual processing, including subcortical areas such as the LGN (O’Connor et al., 2002; McAlonan et al., 2008).
The sources of top-down influences can be ubiquitous, arriving either in the form of direct connections from different cortical areas or as a cascade of inputs originating from different areas (Gilbert and Li, 2013; see Fig. 3).

Pylyshyn (1999) acknowledges that complex computations are carried out in early vision. For example, he maintains that cases of perceptual filling-in that appear to involve top-down processing suggest that the “interpretation of parts of a stimulus may depend on the joint (or even prior) interpretation of other parts of the stimulus, resulting in global-to-local influences such as those studied by Gestalt psychologists,” indicating that early vision may embody some local vision-specific memory (Pylyshyn, 1999: 344). However, he argues that vision is influenced by cognition at only two loci: (a) in the allocation of attention to certain locations or certain properties prior to the operation of early vision; and (b) in the decisions involved in recognizing and identifying patterns after the operation of early vision (Pylyshyn, 1999: 344). In other words, top-down modulation occurs but only pre- or post-perceptually. Pylyshyn uses this distinction to argue that many apparent examples of cognitive penetration arise either from pre-perceptual (attention-allocation) processes or post-perceptual (decision) processes. Since the outcome of visual perception involves either pre-perceptual or post-perceptual processes, Pylyshyn denies that the aforementioned cases (e.g., cases of perceptual filling-in) count as cases of cognitive penetration. However, empirical studies suggest that the interaction of information from the ventral and dorsal pathways can occur in early vision and that the recurrent interaction between higher-level cortical areas and early visual areas such as V1 and V2 seems to play an important role in mediating visual search and attentional routing (Deco and Lee, 2004). Of course, since Pylyshyn does not locate early vision neuroanatomically, it is not clear whether, or to what extent, these findings threaten his claim that the outcome of visual perception involves either pre-perceptual or post-perceptual processes.
Nevertheless, there are reasons for doubting the view of early vision as a pre-attentional stage. For example, studies show that the binding of features such as colour, shape, texture, associated with distinct sense modalities can occur in just 40-60 ms (Schoenfeld et al., 2003). Since attention (including object-based selection and, perhaps, attention to locations) plays a crucial role in feature binding (Worden and Foxe, 2003), these findings suggest that early vision is not a pre-attentional stage.

Raftopoulos (2011 and 2009: Ch. 2) also allows for the possibility of top-down modulation of late vision. He too, however, maintains that early vision, which he defines as the processing stage that lasts no more than 100-120 ms post-stimulus onset, is impervious to top-down modulation.[9] Nevertheless, studies show that the transmission rates through the visual cortex are remarkably rapid. Virtually the entire visual system (including higher-level cortical areas such as the IT) becomes activated within 30 ms of the initial afferent input to area V1 (Schroeder et al., 1998). These studies indicate that 100-120 ms is actually a remarkably long time in terms of neural transmission, and that many regions of the cortex can be activated in half that time (i.e., within 50 ms post-stimulus onset). In fact, studies on humans indicate that even regions of the frontal cortices (associated with cognitive functions such as thinking, problem-solving, and emotions) are activated by visual stimuli within 30-40 ms of initial V1 activation, at a latency of only 70-90 ms post-stimulus onset (Foxe and Simpson, 2002). Robust contextual modulation was also found when disparity, colour, luminance, and orientation cues variously defined a textured figure centered on the receptive field of neurons in area V1 with a characteristic latency of 80-100 ms after stimulus onset, suggesting feedback influences from extrastriate areas (Zipser et al., 1996). These findings suggest that feedback processes impact the ongoing visual processing (Foxe and Simpson, 2002) and, therefore, challenge Raftopoulos’ claim that during the first 100-120 ms post-stimulus onset early vision is impervious to top-down modulation. What all these findings show is that if cognitive penetration is understood in terms of top-down modulation of early vision, it is not difficult to refute the CIT.

4. Top-down modulation vs. cognitive penetration

The Cognitive Impenetrability Thesis (CIT) has been traditionally formulated as a semantic thesis, according to which the function a system computes is not sensitive (in a semantically-coherent way) to the perceiver’s cognitive states and cannot be altered in a way that bears some logical relation to them (Pylyshyn, 1999: 343; Raftopoulos, 2001; Brogaard and Gatzia, 2015).[10] Semantic coherence refers to a rough correspondence between the content of the perceiver’s cognitive states and the content of her visual experience. For example, suppose that you are not susceptible to the Müller-Lyer illusion (say, because you are suffering from schizophrenia) and as a result you experience the lines as having the same length. All things being equal, your belief (that the lines have the same length) and your visual experience (of the lines as having the same length) are semantically coherent; that is, they have roughly the same content. Now suppose that you are susceptible to the Müller-Lyer illusion.[11] All things being equal, your belief that the lines are the same length differs from your visual experience since you continue to experience one line as being longer than the other even after you learn that they have the same length. Your belief (that the lines have the same length) and your visual experience (of the lines as having varying lengths) are thus said to be semantically incoherent; that is, they do not have the same content.

What is most relevant in the debate about CP understood as a semantic thesis is that your belief (that the lines have the same length) cannot alter the function your visual system computes in a way that alters the output (i.e., your visual experience). That explains why you continue to experience the lines as having varying lengths even after you acquire the belief that they have the same length. In order for cognitive penetration to occur, your belief (that the lines are the same length) would have to alter the function of your visual system in such a way as to alter the output (so that you visually experience the lines as having the same length). However, that does not happen. It follows that acquiring the belief that the lines are of the same length is insufficient for altering a visual experience. Showing that cognitive penetration occurs, therefore, requires showing that the changes the visual system undergoes are somehow tied to the perceiver’s cognitive states (in a semantically-coherent way). This distinction is often lost in the literature. For example, Stokes (2013: 650) defines CP as follows:

(CP) A perceptual experience E is cognitively penetrated if and only if (1) E is causally dependent on some cognitive state C and (2) the causal link between E and C is internal and mental.

This definition fails to distinguish between cases of top-down modulation described above (associated with back-end effects) and cases of cognitive penetration (associated with front-end effects), since all that it requires is that the causal link between the experience and the cognitive state is internal (i.e., in the head). Indeed, as I mentioned earlier, this is the definition that Marchi and Newen (2015) use to arrive at the conclusion that experiences of facial expressions are cognitively penetrated by higher-level states. This definition suggests that rejecting the CIT is a matter of pointing to an internal causal link between a perceptual experience and a higher-level state. Although Marchi and Newen show that there is an internal causal link between a perceptual experience and a higher-level state (i.e., a back-end effect on memory access), they fail to show that this is a case of cognitive penetration. A better definition is thus needed. Formulating CP as a semantic thesis (see above) allows us to distinguish between top-down modulation (typically associated with the operations within the visual system, whose functions cannot be altered by the perceiver’s cognitive states such as beliefs) and cognitive penetration (which requires that the function the visual system computes is altered by the perceiver’s cognitive states, i.e., her beliefs, desires, or intentions).

The presence of top-down modulation merely shows that vision “can have an independent existence with extraordinarily sophisticated inferences that are totally separate from standard, everyday, reportable cognition” (Cavanagh, 2011: 1540). For example, empirical studies indicate that the knowledge of colour-luminance relationships between the aligned chromatic and luminance variations is built into the machinery of the human visual system (see Kingdom, 2003).[12] This ‘knowledge,’ however, has an independent existence (i.e., it is not accessible to the perceiver) with extraordinarily sophisticated ‘inferences’ (i.e., computations used by the visual system). Evidence for top-down modulation seems to support the claim that vision has its own ‘inference’ mechanisms, which cannot be altered by the perceiver’s cognitive states. In other words, it supports the claim that vision is not a purely bottom-up process. However, it does not support the claim that a perceiver’s cognitive states can penetrate her visual experience. As Cavanagh (2011: 1539) notes,

Saying that the components of high-level vision are the contents of our visual awareness does not mean that these mental states are computed consciously. It only means that the end point, the product of a whole lot of pre-conscious visual computation is an awareness of the object or intention or connectedness. In fact, what interests us here is specifically the unconscious computation underlying these products, and not the further goal-related activities that are based on them… We are interested in the rapid, unconscious visual processes that choose among many possible representations to come up with the one that we experience as a conscious percept. Attention and awareness may limit how much unconscious inference we can manage and what it will be focused on but it is the unconscious decision processes that are the wheelhouse of visual cognition.

The visual system transforms sensory inputs into “solid visual experiences with little or no evidence of the inferences that underlie it” (Cavanagh, 2011: 1539). The occurrence of perceptual errors (e.g., the Müller-Lyer illusion) may alert us to the existence of these operations that underlie the visual system but cannot grant us access to them (see, for example, section 5). A distinction, therefore, must be made between the ‘inferences’ the visual system makes as it ‘chooses’ among many possible representations to arrive at one that we experience as a conscious percept, which are not consciously accessible to the perceiver, on the one hand, and the cognitive states of perceivers (i.e., their beliefs, desires, intentions, which are consciously accessible to them) which may be able to alter the function their visual system computes, on the other. This distinction is implicit in Fodor’s (1988: 194) response to Churchland (even though it relates to the question of whether the brain’s plasticity should be viewed as a case of diachronic cognitive penetration):

What Churchland needs to show—and doesn’t—is that you also find perceptual plasticity where you wouldn’t expect it on specific ecological grounds; for example, that you can somehow reshape the perceptual field by learning physics.

Fodor’s point here is that changes to visual perception that result from the recalibration of the perceptual/motor mechanisms (such as hand/eye) that correlate bodily gestures with perceived spatial positions and which are required for an organism to grow do not count as cases of cognitive penetration because they are adaptational or developmental. As such they are not brought about by the perceivers’ beliefs, desires, or intentions—indeed, they could not have been brought about by learning physics; they are associated with adaptations of the visual system, which result from changes to the environment. To see how this criticism applies to the issue of cognitive penetration let us take a look at purported counterexamples to the CIT.

5. The effects of colour constancy on colour perception

Colour experience is an interesting case for two reasons. The first is that it has been traditionally considered to be cognitively impenetrable, even by those who reject the CIT. The second is that empirical studies have been recently used to challenge the CIT (Delk and Fillenbaum, 1965; Hansen et al., 2006; Olkkonen et al., 2008).[13] I discuss two such studies (an older and a newer one) and argue that once the above distinction between mere top-down modulation and cognitive penetration is made they lose their force.

In an older study, Delk and Fillenbaum (1965) cut 10 figures out of the same cardboard that had an orange-red colour approximating Munsell chip R/5/12. Some of the figures represented objects that have characteristically red colours (such as apples or a pair of lips) while others represented neutral figures (such as mushrooms or geometric shapes). Subjects were asked to match the colour of each figure, shown to them separately, with a background colour using a differential colour-mixer, which allowed the mixture of two colours. These two colours could be varied from a red shade approximating Munsell chip R/3/8 to a yellow-orange shade approximating Munsell chip YR/6/10 to produce a continuously varying intermediate shade (Delk and Fillenbaum, 1965: 291). Both the figure and the background were illuminated by a fluorescent lamp, which provided a soft illumination of both figure and ground and cast no shadows on either. They found that subjects adjusted the background colour to a red hue when matching it with figures representing characteristically red objects (such as a pair of lips or an apple) but to a reddish-orange hue when matching it with figures representing neutral objects (i.e., objects that are not characteristically red such as mushrooms or geometric shapes).

In a more recent study, Hansen and colleagues (2006) presented subjects with either digitized photographs of natural fruit (such as bananas) or random patches (such as geometric shapes) each placed against a gray background. They asked subjects to adjust the colour of the digitized photograph (either a fruit or a random patch) until it appeared gray. They found that subjects adjusted the colour of the banana, but not the random noise patches, to a slightly bluish hue (the opposite of yellow).

Macpherson (2012) argues that Delk and Fillenbaum’s study provides a counterexample to the CIT. Like Marchi and Newen (2015), Macpherson argues that the best explanation for these results is that subjects experienced the cutouts of characteristically red objects as being redder than the controls, not that subjects formed two different perceptual judgments. She thus concludes that the subjects’ perceptual experiences were penetrated by their beliefs about the colours of familiar objects. A similar argument could be made about the study by Hansen and colleagues: the best explanation is that subjects experienced the banana as being less gray than the controls, not that they formed two different perceptual judgments. Although it is tempting to view these studies as evidence against the CIT, their results are best explained by reference to colour constancy mechanisms, whose computations cannot be altered by the perceiver’s cognitive states.[14]

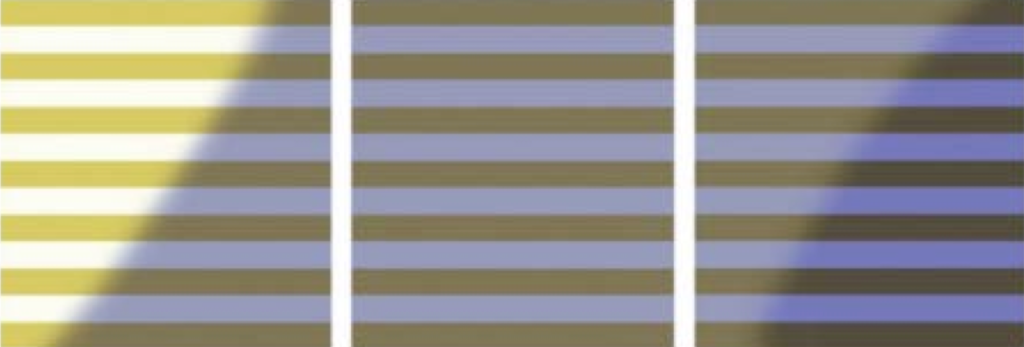

There are two types of colour constancy: simultaneous and successive (Ling and Hurlbert, 2007).[15] Simultaneous colour constancy occurs when perceivers experience identical surface materials in a scene as having the same colour, even though they are illuminated differently. For example, if one part of a single surface is directly lit while the other part is in shadow (that is, lit only via the ambient illumination), the entire surface looks to have the same colour. The visual system ‘discounts’ the spatial change in illumination and recovers a constant surface reflectance across the shadow border. A failure in simultaneous constancy involving such an effect recently shocked the public (and prompted a plethora of news articles from respectable news outlets) when a photograph of a blue-black dress was posted online (see Fig. 9). What was peculiar about this digitized photograph is that while the dress looked blue and black to some, it looked white and gold to others.

This peculiarity can be explained in terms of simultaneous colour constancy. One part of the dress was directly lit while the other part was in shadow, and lit only via the ambient illumination. As a result, the photograph provided inconsistent cues to the visual systems of different perceivers. In order to resolve ambiguities arising from contradictory inputs, the visual system discounts the spatial change in illumination and recovers a constant surface reflectance across the shadow border. So although the entire dress looks to have constant colours, some perceivers experience it as blue-black while others experience it as white-gold. When the visual system interprets the scene as being illuminated by a blue light (which is the illumination the visual system typically encounters in natural daylight), the dress looks white-gold (because the visual system discounts the blue light; see image A in Fig. 5). But when the visual system interprets the scene as being lit by a yellowish light (which is the illumination the visual system encounters in the presence of a shadow), the dress looks blue-black (see image C in Fig. 5). In either case, perceivers would be unable to alter the colour constancy computations their visual systems performed (and which resulted in radically different experiences) even if they were aware of them. Indeed, not only were perceivers surprised to find out that the dress looked to have different colours to other people, but this realization also had absolutely no effect on their visual experiences: their colour experiences remained unchanged.

Successive colour constancy occurs when we see the same object as having the same colour under different illuminations. Although the colour of the object undergoes (in many cases radical) changes under different illuminations (see, for example, the series of paintings by Monet), successive colour constancy mechanisms ensure that (for the most part) these changes go unnoticed. The results from Delk and Fillenbaum’s (1965) study can be explained in terms of successive colour constancy.[16] Recall that subjects adjusted the background to a redder hue when matching it to the characteristically red figures but to a reddish-orange hue when matching it to the controls. This seems to be a case of a back-end effect on memory access, where the relevant representations in memory are activated when a stimulus is present. In this case, the relevant representations in memory may have been activated when the characteristically red stimuli were presented (in this case the apple or the pair of lips) but not when the controls were presented. This would explain why subjects adjusted the background to a red hue for the characteristically red figures but not for the controls: the relevant representations in memory are activated only for the characteristically red objects, making the visual system prone to error. However, these results do not support the claim that cognitive penetration occurred, since these subjects would be unable to alter computations they have no conscious awareness of in such a way as to alter their visual experience. So although the above explanation makes reference to top-down modulation (i.e., to back-end effects), it does not provide a good reason for rejecting the CIT.

The same can be said about the study by Hansen and colleagues (2006). In this case, subjects adjusted the colour of the banana (but not the random patches) to a slightly bluish hue (the opposite of yellow) in attempting to make it appear gray. This seems to be a case of a back-end effect on memory access, where the relevant representations in memory are activated when a stimulus is present. In this case, the relevant representations in memory may have been activated when the characteristically yellow stimuli were presented (in this case the banana) but not when the random patches were presented. This would explain why subjects adjusted the banana, but not the random patches, to a slightly bluish hue: the relevant representations in memory are activated only for the characteristically yellow objects, making the visual system prone to error. Certainly, Hansen et al. (2006: 1368) seem to find this explanation plausible:

Another question posed by our results is whether they are applicable to the type of lighting conditions occurring naturally. Notably, natural illumination during the course of the day varies mostly along the blue-yellow dimension. Natural daylight contains a high proportion of short-wavelength energy in the morning and gradually shifts toward energy of longer wavelengths during the course of the day. Most fruit objects are yellowish rather than bluish; therefore, they might appear grayish in the morning, as color constancy is known to be imperfect even in realistic situations. The appearance shifts described here may contribute toward color constancy under these conditions (emphasis added).

However, these results do not support the claim that cognitive penetration occurred, since these subjects would be unable to alter computations they have no conscious awareness of in such a way as to alter their visual experience. So although the above explanation makes reference to top-down modulation (i.e., to back-end effects), it does not provide a good reason for rejecting the CIT. This conclusion is independently supported by the fact that although colour constancy plays an important role in object recognition (e.g., when the visual system retrieves relevant memory representations when a stimulus is present), colour constancy computations are not obligatorily linked to experiencing objects and may precede the experiencing of objects (Kentridge et al., 2014). Given this, any changes to the function a visual system computes, which may alter one’s colour experience, cannot be attributed to the perceiver’s beliefs, desires, or intentions. It follows that the findings of the aforementioned studies cannot be used as evidence against the CIT.

6. Conclusion

In section 2, I provided the context in which to understand the debate about the CIT. In section 3, I showed that early vision is subject to top-down modulation. In section 4, I argued that once we distinguish between top-down modulation resulting from the computations performed by the visual system (i.e., back-end effects), which cannot be altered by the cognitive states of perceivers since they are not consciously accessible to them, and cognitive penetration (i.e., front-end effects), it becomes clear that evidence for top-down modulation (even in early vision) need not threaten the CIT. In section 5, I used this distinction to show that empirical findings that have been used to argue that colour experience is cognitively penetrated can be better explained by reference to back-end effects, specifically memory and recognition.

References

Arnsten, A. (2009). Stress signaling pathways that impair prefrontal cortex structure and function. Nature Reviews Neuroscience 10(6): 410-422.

Bartleson, C. J. (1960) Memory Colors of Familiar Objects. Journal of the Optical Society of America 50(1): 73-77.

Blumenfeld, R. S., Ranganath, C. (2007). Prefrontal cortex and long-term memory encoding: an integrative review of findings from neuropsychology and neuroimaging. The Neuroscientist 13(3): 280-91.

Borenstein, E., Ullman, S. (2008). Combined top-down/bottom-up segmentation. IEEE Transactions on Pattern Analysis and Machine Intelligence 30(12): 2109-2125.

Brogaard, B. and Gatzia, D. E. (In press) Color and Cognitive Penetrability. Topics in Cognitive Science: Special Issue on Cortical Color.

Bruce, V. and Burton, M. (2002). Learning to recognize faces. In M. Fahle and Poggio T. (Eds.) Perceptual Learning, pp. 317-334. Cambridge (MA): MIT Press.

Bruner, J. S. and Goodman, C. C. (1947) Value and need as organizing factors of perception. J. Exp. Psychol. Hum. Percept. Perform. 25: 1076-96

Bruner, J. S. and Postman, L. (1949) On the perception of incongruity: a paradigm. J. Pers. 18: 206-223.

Bruner, J. S., Postman, L., Rodrigues, J. (1951). Expectation and the Perception of Color. The American Journal of Psychology, 64(2): 216-227.

Bullinaria, John, A. (2007). Understanding the Emergence of Modularity in Neural Systems. Cognitive Science 31: 673-695.

Cavanagh, P. (2011). Visual Cognition. Vision Research 51: 1538-1551.

Carruthers, P. (2006). The Architecture of the Mind. Oxford: Oxford University Press.

Cauller, L. (1995). Layer I of primary sensory neocortex: where top-down converges upon bottom-up. Behavioural Brain Research 71: 163–170.

Cecchi, A. S. (2014). Cognitive penetration, perceptual learning, and neural plasticity. Dialectica 68(1): 63-95.

Churchland, P. M. (1988) Perceptual Plasticity and Theoretical Neutrality: A Reply to Jerry Fodor, Philosophy of Science 55: 167–187.

Courtney, S. M. Petit, L. Haxby, J. V. and Ungerleider, L. G. (1998). The role of prefrontal cortex in working memory: examining the contents of consciousness. Philosophical Transactions of the Royal Society of London B: Biological Sciences 353 (1377): 1819–1828.

Crist, R. E., Li, W. & Gilbert, C. D. (2001). Learning to see: experience and attention in primary visual cortex. Nature Neuroscience 4: 519–525.

Dayan, P., Hinton, G. E., Neal, R. M, Zemel, R.S. (1995). The Helmholtz machine. Neural Computation 7 (5): 889–904.

Deco, G. and Rolls, E. T. (2008). Neural Mechanisms of Visual Memory: A Neurocomputational Perspective. In S. J. Luck and A. Hollingworth (eds), Visual Memory. Oxford: Oxford University Press (pp. 247-289).

Deco, G. and Lee, T.S. (2004). The role of early visual cortex in visual integration: a neural model of recurrent interaction. European Journal of Neuroscience 20: 1089-1100.

Delk, J., Fillenbaum, S. (1965). Differences in perceived color as a function of characteristic color. The American Journal of Psychology 78(2): 290–293.

Davidoff, Jules. (1991). Cognition through Color. Cambridge, MA: MIT Press.

DeRenzi, E. and Spinnler, H. (1967). Impaired Performance on Color Tasks in Patients with Hemispheric Lesions. Cortex, 3: 194-217.

Driesen, N.R. Leung, H-C., Calhoun, V.D., Constable, R.T., Gueorguieva, R., Hoffman, R. Skudlarski, P. Goldman-Rakic, P.S. Krystal, J.H. (2008). Impairment of Working Memory Maintenance and Response in Schizophrenia: Functional Magnetic Resonance Imaging Evidence. Biological Psychiatry 64(12): 1026–1034.

Egan, Frances (1999). “Intentionality and the theory of vision.” In Kathleen Akins (Ed.) Perception. Oxford: Oxford University Press, 232-248.

Farah, Martha. J. (1988). “Is Visual Imagery Really Visual? Overlooked Evidence from Neuropsychology”, Psychological Review, 95: 307-317.

Firestone, C. & Scholl, B. J. (in press). Cognition does not affect perception: Evaluating the evidence for ‘top-down’ effects. Behavioral & Brain Sciences. (http://perception.research.yale.edu/preprints/Firestone-Scholl-BBS.pdf)

Frisby, John P. and Stone James V. (2010). Seeing: The Computational Approach to Biological Vision (2nd edition). Cambridge: MIT Press.

Fodor, J. A. (1983). The Modularity of Mind, Cambridge MA: MIT Press.

Fodor, J. A. (2000) The mind doesn’t work that way: The scope and limits of computational psychology. Cambridge, MA: MIT Press.

Foxe, J. J., Simpson, G. V. (2002). Flow of activation from V1 to frontal cortex in humans: A framework for defining “early” visual processing. Experimental Brain Research 142 (1): 139-50.

Fuster, Joaquin M. (2008). The Prefrontal Cortex. Boston: Academic Press.

Hansen, Thorsten, Olkkonen, Maria, Walter, Sebastian, and Gegenfurtner, Karl R. (2006). Memory Modulates Color Appearance. Nature Neuroscience 9(11): 1367-1368.

Hebart M. N. and Hesselmann, G. (2012). What visual information is processed in the human dorsal stream? The Journal of Neuroscience 32(24): 8107-8109.

Heywood, C. A., Kentridge, R. W. and Cowey, A. (2001). Colour & the Cortex: Wavelength Processing in Cortical Achromatopsia. In B. De Gelder, E. De Haan, & C. A. Heywood (eds.) Varieties of Unconscious Processing: New Findings & Models, (pp. 52-68). Oxford: Oxford University Press.

Heywood, C. A. and Kentridge, R. W. (2003). Achromatopsia, colour vision & cortex. Neurological Clinics of North America 21: 483–500.

Hubel, D. H. and Wiesel, T. N. (1978). Functional architecture of macaque monkey visual cortex. Proc. R. Soc. B (Lond.) 198: 1-59.

Gallese, V. (2007). The “conscious” dorsal stream: embodied simulation and its role in space and action conscious awareness. Psyche 13(1).

Gegenfurtner, Karl, Witzel, Christoph, Valkova, Hanna, Hansen, Thorsten (2011) Object Knowledge Modulates Colour Appearance, i-Perception 2: 13-49.

Gilbert, C. D. and Li, W. (2013). Top-down influences on visual processing. Nature Reviews: Neuroscience 14: 351.

Gilbert, C. D. and Li, W. (2012). Adult visual cortical plasticity. Neuron 75: 250–264.

Goldman-Rakic, P. S. (1988). Topography of cognition: parallel distributed networks in primate association cortex. Annual Review of Neuroscience 11: 137–56.

Goldstone, R. L., Braithwaite, D. W., & Byrge, L. A. (In press). Perceptual learning. In N. M. Seel (Ed.), Encyclopedia of the Sciences of Learning. Heidelberg, Germany: Springer Verlag.

Gordon, Ian, E. (2004). Theories of Visual Perception. New York: Psychology Press.

Ito, M. and Gilbert, C. D. (1999) Attention modulates contextual influences in the primary visual cortex of alert monkeys. Neuron 22: 593–604.

Graham, D., Meng, M. (2011). Altered spatial frequency content in paintings by artists with schizophrenia. i-perception 2: 1-9.

Gregory, Richard L. (2009). Seeing Through Illusions. Oxford: Oxford University Press.

Gregory, Richard L. (2004). The Blind Leading the Sighted: an Eye-opening Experience of the Wonders of Perception. Nature 430: 1-1.

Gregory, Richard L. and Jean G. Wallace. (2001). Recovery from Early Blindness: A case study, reproduced from Experimental Psychology Society Monograph No. 2 1963: http://www.richardgregory.org/papers/recovery_blind/contents.htm.

Gregory, Richard L. (1998). Brainy Mind. British Medical Journal 317: 1693-5.

Gregory, Richard L. (1997). Knowledge in perception and illusion. Philosophical Transactions of the Royal Society, Series B 352: 1121–1128.

Gregory, Richard L. (1980). Perceptions as hypotheses. Philosophical Transactions of the Royal Society, Series B 290: 183-97.

Gregory, Richard L. (1970). The Intelligent Eye. London: Weidenfeld & Nicolson.

Kastner, S., De Weerd, P., Desimone, R. and Ungerleider, L. G. (1998). Mechanisms of directed attention in the human extrastriate cortex as revealed by functional MRI. Science 282: 108–111.

Keane, B. P., Silverstein, S. M., Wang, Y., Papathomas, T. V. (2013). Reduced depth inversion illusions in schizophrenia are state-specific and occur for multiple object types and viewing conditions. Journal of Abnormal Psychology 122(2): 506–512.

Kingdom, F. A. A. (2003). Color brings relief to human vision. Nature Neuroscience 6(6): 641-644.

Kentridge, R., Norman, L. Akins, K., Heywood, C. (2014). Colour Constancy Without Consciousness, presented at the Towards a Science of Consciousness Conference, Tucson, April 2014.

Koethe, D., Kranaster, L., Hoyer, C., Gross, S., Neatby, M. A., Schultze-Lutter, F., Ruhrmann, S., Klosterkötter, J., Hellmich, M., Leweke, F. M. (2009). Binocular depth inversion as a paradigm of reduced visual information processing in prodromal state, antipsychotic-naive and treated schizophrenia. European Archives of Psychiatry and Clinical Neuroscience 259(4): 195–202.

Kottenhoff, H. (1957) Situational and personal influences on space perception with experimental spectacles. Part one: prolonged experiments with inverting glasses. Acta Psychologica 13: 79-97.

Kuehni, Rolf, G. (2003). Olive Green or Chestnut Brown? Behavioral and Brain Sciences 26: 35-36.

Kurson, Robert. (2007). Crashing Through: A True Story of Risk, Adventure, and the Man Who Dared to See. New York: Random House.

Lee, Tai Sing (2003). Computations in the early visual cortex. Journal of Physiology (Paris) 97: 121–139

Lee, T. S., Mumford, D., Romero, R., Lamme, V. A. F. (1998). The Role of the Primary Visual Cortex in Higher Level Vision. Vision Research 38: 2429-2454.

Li, W., Piech, V., Gilbert, C. D. (2004). Perceptual learning and top-down influences in primary visual cortex. Nature Neuroscience 7: 651–657.

Li, W., Piech, V., Gilbert, C. D. (2008). Learning to link visual contours. Neuron 57: 442–451.

Li, W., Piech, V. & Gilbert, C. D. (2008b). Learning to link visual contours. Neuron 57, 442–451.

Mamassian, P., Landy, M., and Maloney, L. T. (2002). Bayesian Modeling of Visual Perception, in Rao, R. P. N., Olshausen, B. & Lewicki M. S. (eds) Probabilistic Models of the Brain: Perception and Neural Function. Cambridge (MA): The MIT Press.

Macpherson, Fiona. (2012). Cognitive Penetration of Colour Experience: Rethinking the Issue in Light of an Indirect Mechanism. Philosophy and Phenomenological Research 84(1): 24-62.

Marr, David. (1982) Vision: A computational investigation into the human representation and processing of visual information. San Francisco, CA: Freeman.

Marr, David. (1976) “Early Processing of Visual Information”, Philosophical Transactions of the Royal Society of London, Series B, Biological Sciences, 275(942): 483-519.

McAlonan, K., Cavanaugh, J. and Wurtz, R. H. (2008). Guarding the gateway to cortex with attention in visual thalamus. Nature 456: 391–394.

McManus, J. N., Li, W. and Gilbert, C. D. (2011). Adaptive shape processing in primary visual cortex. Proceedings of the National Academy of Sciences of the USA 108: 9739–9746.

McRae, K., Jones, M. N. (2013). Semantic Memory. In D. Reisberg (ed.) The Oxford Handbook of Cognitive Psychology (pp. 206-219). Oxford: Oxford University Press.

Milner, A. D. and Goodale, M. A. (2006). The visual brain in action. Oxford: Oxford University Press.

Mitterer, H., Horschig, J., M., Müsseler, J., and Majid, A. (2009). The Influence of Memory on Perception: It’s not what things look like, it’s what you call them. Journal of Experimental Psychology: Learning, Memory, and Cognition 35(6): 1557-1562.

Motter, B. C. (1993). Focal attention produces spatially selective processing in visual cortical areas V1, V2, and V4 in the presence of competing stimuli. Journal of Neurophysiology 70: 909–919.

Mumford, D. (1996). Commentary on ‘Banishing the homunculus’ by H. Barlow. In Knill, D.C. & Richards, W. (eds), Perception as Bayesian. Cambridge: Cambridge University Press (pp. 501–504).

O’Connor, D. H., Fukui, M. M., Pinsk, M. A. & Kastner, S. (2002). Attention modulates responses in the human lateral geniculate nucleus. Nature Neuroscience 5: 1203–1209.

Olkkonen, M., Hansen, T., & Gegenfurtner, K. (2008). Color appearance of familiar objects: Effects of object shape, texture, and illumination changes. Journal of Vision 8(5): 1–16.

Pecher, D., Zwaan, R. A. (2005). Grounding cognition: The role of perception and action in memory, language, and thinking. Cambridge: Cambridge University Press.

Pinto, Y., van der Leij, A. R., Sligte, I. G., Lamme, V. A. F., H. Scholte, S. (2013). Bottom-up and top-down attention are independent. Journal of Vision 13(3): (article) 16.

Pinto, Y., Sligte, I. and V. Lamme (2013a). Working memory requires focal attention, fragile VSTM does not. Journal of Vision 13(9): (article) 459.

Pollen, D. A. (1999). On the Neural Correlates of Visual Perception, Cerebral Cortex 9(1): 4-19.

Pourtois, G., Rauss, K. S. Vuilleumier, P., Schwartz, S. (2008). Effects of Perceptual Learning on Primary Visual Cortex Activity in Humans. Vision research 48: 55–62.

Prinz, Jesse J. (2006). Is the Mind Really Modular? In Robert J. Stainton (Ed.) Contemporary Debates in Cognitive Science. Malden: Blackwell Publishing, pp. 22-36.

Purves D. and Lotto, B. R. (2011). Why we see what we do redux. Sunderland (MA): Sinauer Associates, Inc.

Pylyshyn, Zenon (1999). Is Vision Continuous with Cognition? The Case for Cognitive Impenetrability of Visual Perception, Behavioral and Brain Sciences, 22: 341-423.

Pylyshyn, Z. (1984). Computation and Cognition. Cambridge, MA: MIT Press.

Pylyshyn, Zenon (1973). “What the Mind’s Eye Tells the Mind’s Brain: A Critique of Mental Imagery”, Psychological Bulletin, 80(1): 1-24, 1973.

Raftopoulos, A. (2001). Is Perception Informationally Encapsulated? The Issue of the Theory-Ladenness of Perception, Cognitive Science, 25, 423–51.

Raftopoulos, A. (2009). Cognition and Perception: How Do Psychology and Neural Science Inform Philosophy? Cambridge, MA: MIT Press.

Raftopoulos, A. (2011) Late vision: processes and epistemic status. Frontiers in Psychology 2: (article) 382. doi: 10.3389/fpsyg.2011.00382.

Rao, Rajesh, P. N., Ballard, D. H. (1999). Predictive coding in the visual cortex: a functional interpretation of some extra-classical receptive-field effects. Nature Neuroscience 2(1): 79–87.

Rauss, K., Schwartz, S. and Pourtois, G. (2011). Top-Down Effects on Early Visual Processing in Humans: A Predictive Coding Framework. Neuroscience and Biobehavioral Reviews 35: 1237–1253.

Rescorla, M. (2013). Bayesian Perceptual Psychology. In Mohan Matthen (ed.) The Oxford Handbook of the Philosophy of Perception.

Riesenhuber, M., Poggio, T. (1999). Hierarchical models of object recognition in cortex. Nature Neuroscience 2: 1019–1025.

Roelfsema, P. R., van Ooyen, A., and Watanabe, T. (2010). Perceptual learning rules based on reinforcers and attention. Trends in cognitive sciences 14(2), 64–71.

Rock, I. (1983) The logic of perception. Cambridge (MA): MIT Press.

Sakuraba, S., Sakai, S., Yamanaka, M., Yokosawa, K., Hirayama, K. (2012). Does the human dorsal stream really process a category for tools? The Journal of Neuroscience 32: 3949–3953.

Schacter, D. L. (1987) Implicit memory: history and current status. Journal of Experimental Psychology: Learning, Memory, and Cognition 13: 501-518.

Schlack, A., Albright, T. D. (2007) Remembering visual motion: neural correlates of associative plasticity and motion recall in cortical area MT. Neuron 53: 881–890.

Schneider, U., Borsutzky, M., Seifert, J., Leweke, F.M., Huber, T.J., Rollnik J.D., Emrich, H.M., (2002). Reduced binocular depth inversion in schizophrenic patients. Schizophrenia Research 53: 101–108.

Schneider, U., Leweke, F.M., Sternemann, U., Weber, M.M., Emrich, H.M., (1996). Visual 3D illusion: a systems-theoretical approach to psychosis. European Archives of Psychiatry and Clinical Neuroscience 246: 256–260.

Schoenfeld, M. A., Tempelmann, C., Martinez, A., Hopf, J. M., Sattler, C., Heinze, H. J. & Hillyard, S. A. (2003). Dynamics of features binding during object-selective attention. Proceedings of the National Academy of Sciences of the USA 100: 11806 –11811.

Schroeder, C. E., Mehta, A. D. & Givre, S. J. (1998). A spatiotemporal profile of visual system activation revealed by current source density analysis in the awake macaque. Cerebral Cortex 8: 575–592.

Schwartz, S. (2007). Functional MRI Evidence for Neural Plasticity at Early Stages of Visual Processing in Humans. In N. Osaka, I. Rentschler, and I. Biederman (eds.) Object Recognition, Attention, and Action, pp. 27–40. Tokyo: Springer.

Schwartz, S., Maquet, P. and Frith, C. (2002). Neural Correlates of Perceptual Learning: A Functional MRI Study of Visual Texture Discrimination. Proceedings of the National Academy of Sciences 99: 17137–17142.

Schwartz, S., Vuilleumier, P. Hutton, C. Maravita, A. Dolan, R. J., Driver, J. (2005). Attentional Load and Sensory Competition in Human Vision: Modulation of fMRI Responses by Load at Fixation During Task-Irrelevant Stimulation in the Peripheral Visual Field. Cerebral cortex 15: 770–786.

Siegel, S. (In Press). Rational Evaluability and Perceptual Farce. In J. Zeimbekis and A. Raftopoulos (eds) Cognitive Effects on Perception: New Philosophical Perspectives.

Siegel, S. (2012). Cognitive Penetrability and Perceptual Justification. Noûs, 46(2): 201-222.

Siegel, S. (2010). The Contents of Visual Experience. New York: Oxford University Press.

Siegel, S. (2005). Which properties are represented in perception? In T. Szabo-Gendler & J. Hawthorne (eds.), Perceptual experience (pp. 481–503). Oxford: Oxford University Press.

Sinha, P. and Poggio, T. (2002). Higher-level learning of early visual tasks. In M. Fahle and Poggio T. (Eds.) Perceptual Learning, pp. 273-298. Cambridge (MA): MIT Press.

Siple, P. and Springer, R. M. (1983). Memory and Preference for the Colors of Objects. Perception and Psychophysics 34(4): 363-370.

Spruit, Leen. (2008). Renaissance Views of Active Perception. In Simo Knuuttila and Pekka Kärkkäinen (eds) Theories of Perception in Medieval and Early Modern Philosophy (pp. 203-224). New York: Springer.

Sterzer, P., Haynes, J. D., Rees, G. (2008). Fine-scale activity patterns in high-level visual areas encode the category of invisible objects. Journal of Vision 8: 1–12.

Styles, E. A. (2006). The Psychology of Attention. Hove: Psychology Press.

Tomasi C. (2001). Early vision, Encyclopedia of Cognitive Sciences 20. London: Nature Publishing Group, Macmillan Reference Limited.

Tulving, E. (1972). Episodic and semantic memory. In E. Tulving & W. Donaldson (eds), Organization of Memory (pp. 381-403). New York: Academic Press.

Tversky, A., Kahneman, D. (1974). Judgment under uncertainty: Heuristics and biases. Science 185: 1124–1131.

Tye, Michael. (2000). Consciousness, Color, and Content. Cambridge (MA): MIT Press.

Ullman, S. (2007). Object recognition and segmentation by a fragment-based hierarchy. Trends in Cognitive Sciences 11: 58–64.

Vallortigara, G. (1999). Segregation and integration of information among visual modules. Behavioral and Brain Sciences 22(3): 398.

Wallbott, H.G., Ricci-Bitti, P. (1993). Decoders’ processing of emotional facial expression—a top-down or bottom-up mechanism? European Journal of Social Psychology 23: 427–443.

Wallis, G. and Bülthoff, H. (2002). Learning to recognize objects. In M. Fahle and Poggio T. (Eds.) Perceptual Learning, pp. 299-316. Cambridge (MA): MIT Press.

Worden, M. S. and Foxe, J. J. (2003). The dynamics of the spread of selective visual attention. Proceedings of the National Academy of Sciences of the USA 100(21): 11933-11935.

Wu, W. (2013). Visual Spatial Constancy and Modularity: Does Intention Penetrate Vision? Philosophical Studies, 165: 647-669.

Yasuda, M., Banno, T. and Komatsu, H. (2010). Color Selectivity of Neurons in the Posterior Inferior Temporal Cortex of the Macaque Monkey. Cerebral Cortex 20(7): 1630-1646.

Zanto, T. P. and Gazzaley, A. (2013). Fronto-parietal network: flexible hub of cognitive connectivity. Trends in Cognitive Sciences 17(12): 602-603.

Zeimbekis, John. (2013). Color and Cognitive Penetrability. Philosophical Studies 165: 167-175.

Zenger, B. and Sagi, D. (2002). Plasticity of low-level visual networks. In M. Fahle and Poggio T. (Eds.) Perceptual Learning, pp. 177-196. Cambridge (MA): MIT Press.

Zhang, N. R. & von der Heydt, R. (2010). Analysis of the context integration mechanisms underlying figure–ground organization in the visual cortex. J. Neurosci. 30: 6482–6496

Zipser, K., Lamme, V. A. & Schiller, P. H. (1996). Contextual modulation in primary visual cortex. Journal of Neuroscience 16: 7376–7389.

Notes

[1] Traditionally, the ventral stream is viewed as the vision-for-perception pathway while the dorsal stream is viewed as the vision-for-action pathway (see Milner and Goodale, 2006). While the ventral stream subserves recognition and discrimination of shapes and objects, the dorsal stream subserves visually guided action, e.g., reaching and grasping based on the moment-to-moment analysis of spatial location, shape, and orientation of objects (see Milner and Goodale, 2006; Hebart and Hesselmann, 2012). Taken together, the ventral and dorsal visual regions are associated with the visual-motor system. Contrary to this traditional view, Gallese (2007) discusses various studies illustrating that motor knowledge is employed to solve perceptual tasks and argues that the dorsal stream is responsible not only for the unconscious control of action but also for the conscious awareness of space and action. In addition, the findings by Sakuraba et al. (2012) are hard to reconcile with the idea that the dorsal stream processes information in the absence of perceptual awareness and, hence, without input from high-level visual areas in the ventral stream (see also Sterzer et al., 2008).

[2] Some hierarchical models suggest that visual perception begins with the analysis of simple features such as orientation and culminates with complex aspects of the visual scene such as shapes and objects (see Marr, 1982; Riesenhuber and Poggio, 1999).

[3] In other words, top-down signals can impart different meanings about the same visual scene based on the behavioral context (Frith and Dolan, 1997; Gilbert and Li, 2013; Purves and Lotto, 2011; Dayan et al., 1995; Pollen, 1999; Lee et al., 1998; Rao et al., 1999; O’Connor et al., 2002; McAlonan et al., 2008). For example, contextual influences (that is, the ways in which the perceptual qualities of a local feature are affected by surrounding scene elements and the way in which characteristics of the global scene affect neuron responses to local features) play an important role in perceptual grouping, perceptual constancies, contour integration, surface segmentation, and shape recognition.

[4] This view is often called constructivism (see Gregory, 1970, 2001, and 2009; Frith and Dolan, 1997; Palmer, 1999; Mamassian et al., 2002; Rescola, 2013).

[5] Interestingly, the relevant memory representations are activated when they touch familiar objects possibly because their tactile perceptual system exhibits the sort of top-down modulation the visual system of normal subjects exhibits in relation to visual perception.

[6] The central tenet of Marr’s hierarchical model is that visual processing involves low (primal sketch), intermediate (2.5-D sketch), and high (3-D model representation) levels, roughly corresponding to different areas of the visual cortex. Accordingly, the function of the primal sketch is to take the raw intensity values present in the retinal image and make explicit the information required to detect surfaces; the function of the 2.5-D sketch is to make explicit the orientation and approximate depth of surfaces in a viewer-centered frame of reference (viewer-centered in the sense that the emerging image is not yet linked to the external environment but is organized with reference only to the viewer); and the function of the 3-D model representation is to make explicit shapes and their spatial organization in an object-centered frame of reference as seen by the perceiver (object-centered in the sense that the emerging image is independent of particular positions and orientations of the retina). It is only at this high level of computational processing that the viewer attains a representation of the distal world.

[7] Raftopoulos (2011) uses the term “late vision” to refer to the conceptually modulated stage of visual processing starting at 150-200 ms post-stimulus onset, which, unlike early vision, he considers to be cognitively penetrable.

[8] Marr offered two reasons for this claim: that visual ‘forms’ can be extracted from the image by using knowledge-free techniques, and that the top-down flowing of information does not affect the computation of the primal sketch.

[9] Raftopoulos allows that late vision, which takes place after the first 120 ms post-stimulus onset, is cognitively penetrable. However, since late vision involves post-perceptual processes, the fact that it is cognitively penetrable does not threaten the CIT (Raftopoulos, 2011).

[10] More recently, it has also been formulated as a causal thesis, according to which cognitive states cannot cause changes in the content or phenomenal character of visual experience (Siegel, 2005, 2012; Stokes, 2013). For example, your belief that the ripe banana in front of you is green cannot cause you to experience it as green. I discuss one of these theses in what follows.

[12] Chromatic variations arise from surfaces such as flowers or painted objects while pure or near-pure luminance variations arise mainly from inhomogeneous illumination such as shadows or shading.

[13] The term ‘colour memory’ refers to a back-end effect in relation to successive colour constancy; it refers to the process used to account for changes in illumination. Specifically, the visual system records the spectral properties of a scene in memory. These mental representations can be activated at a later time to recognize objects. I discuss these back-end effects in relation to successive colour constancy below.

[14] Colour constancy was initially attributed to adaptation mechanisms. It was thought that the visual system adjusts its sensitivity to the light according to the context in which the light appears (see Burnhan et al., 1957; Jameson and Hurvich, 1989; Webster and Mollon, 1995). However, recent studies attribute colour constancy to local and global contrast mechanisms (see Kraft and Brainard, 1999; Heywood et al., 2001; Heywood and Kentridge, 2003).

[15] Studies show that although simultaneous colour constancy is limited and variable, successive colour constancy is fast and reliable (Foster and Nascimento, 1994).

[16] It is also possible that successive colour contrast mechanisms accounted for these differences between characteristically red figures and the controls. The fact that the scene was lit using a fluorescent light (which has a greenish tint, at least in photographs in the absence of colour correction) may have contributed to the error since it may have had a different effect on the coloured light that was used to generate the background than it had on the surface colour of the figure, thereby creating an ambiguity similar to that causing the public controversy over the blue-black dress. I am grateful to Bob Kentridge for suggesting this possibility.

1. Intro

Dimitria Gatzia’s paper provides helpful clarifications to debates about whether perception is cognitively impenetrable. Gatzia argues that the effects of information stored in high-level perceptual systems (call it “high-level info”) on perceptual processing must be distinguished from effects of cognitive states on perceptual processing. She further argues that the latter, but not the former, count as cognitive penetration. I think this is correct, and is important to keep in mind when searching for evidence of cognitive penetration.

A crucial question going forward is: What distinguishes high-level info from cognitive states? Gatzia writes that cognitive penetration “involves the perceiver’s cognitive states (as opposed to high-level information processing systems of the visual system)” [emphasis in original]. She also contrasts intra-perceptual information with “cognitive states of perceivers (i.e., their beliefs, desires, intentions, which are consciously accessible to the them [sic]).” Passages like these seem to suggest that cognitive penetration involves effects on perception by states that are (i) consciously accessible and (ii) personal-level (rather than subpersonal), while (iii) high-level info is neither consciously accessible nor personal-level, and thus its effects on perception do not count as cognitive penetration. In that case, determining whether the effect of a state is cognitive penetration requires determining whether the state is consciously accessible or personal-level.

I don’t mean to attribute this view to Gatzia. She might not in fact endorse (i)-(iii), or she might endorse them but deny that they account for the difference between high-level info and cognitive states. However, it may be helpful to consider these claims in their own right in the hopes of finding a good metric for distinguishing cognitive states from high-level info. Doing so might help with Gatzia’s project of characterizing cognitive penetration, and with larger projects of mapping out the architecture of the mind.

2. Consciousness

First, whether the state can become conscious is not a promising metric for at least two reasons.

(a) While it may be correct that high-level info is (at least typically) not accessible to consciousness, it’s not clear that this fact plays a role in explaining why top-down effects of high-level info fail to constitute cognitive penetration.

Suppose Phil is a mental duplicate of Lil, except while Lil’s high-level info is unconscious, Phil’s is conscious. We can stipulate that Phil and Lil’s high-level info is alike in all other relevant respects—i.e., both are encapsulated, have the same content, the same representational format, are accessed by the visual system in the same way, and cannot be used directly for cognitive processes (excluding consciousness, if consciousness is cognitive). If a state’s accessibility to consciousness were sufficient for its influence on perception to count as cognitive penetration, then the perceptual states delivered by Phil’s visual system would be the result of cognitive penetration while those delivered by Lil’s visual system would not. But both intuitively and from the perspective of mental architecture, both cases seem to be on a par; there doesn’t seem to be anything fundamentally different about the nature of the processes, such that one should and the other shouldn’t count as cognitive penetration. The fact that a state is conscious, therefore, doesn’t seem to mandate that its influence on perception counts as cognitive penetration.

Perhaps, in normal human minds, when a state is conscious it also plays other cognitive roles, and so perhaps knowing that a state is conscious gives us some reason to think that it is cognitive. But in that case, what makes the state cognitive and what makes its influence on perception count as cognitive penetration is not consciousness itself, but rather some other aspect of mental architecture that correlates with consciousness. In that case, conscious accessibility can provide evidence that a state is cognitive, but is not part of what makes it the case that it is cognitive.

One major caveat: Views like Global Workspace Theory (Baars 1988; Dehaene & Naccache 2001) identify consciousness with certain kinds of cognitive functioning, which might make the above thought experiment incoherent since it stipulates that Phil’s conscious high-level info is functionally equivalent to Lil’s unconscious high-level info. But in that case, consciousness is only a good metric for distinguishing high-level info from cognitive states if we assume certain tendentious theories of consciousness.

(b) Using accessibility to consciousness as a metric for deciding whether a state can cognitively penetrate perception seems to rule out the idea that there are consciously inaccessible but personal-level cognitive states. Take implicit bias. Implicit biases are cognitive states that drive behavior and are arguably attributable to people rather than mere subsystems of people. For instance, if a person crosses the street to avoid someone of a different race because of an implicit bias, that behavior should arguably be explained by appeal to that person’s biases, even if they take the form of consciously inaccessible implicit attitudes. Implicit biases also arguably exhibit the same sort of propositional structure as other paradigmatically cognitive propositional attitudes (Mandelbaum 2016). If an implicit bias directly affects perceptual processing (for example, if it causes someone to perceive an African-American face with a neutral expression as angry), that seems to be a case of cognitive penetration. This seems true even if implicit biases are inaccessible to consciousness (which is, however, a matter of controversy; see, e.g., Hahn et al. 2014).

All of these claims about implicit bias are controversial, to be sure. But we may not want to draw an architectural border between high-level info and information stored in cognition in a way that assumes implicit bias is consciously accessible, or that inaccessible attitudes are never personal-level cognitive states.

Furthermore, repressed beliefs and desires (if they exist) provide another example of cognitive states that are arguably personal-level but not consciously accessible. If someone’s repressed beliefs or desires alter their perceptual processing, that seems to count as cognitive penetration even though the states are not accessible to consciousness.

If these points are correct, then a state’s being consciously accessible is neither necessary nor sufficient for its influence on perception to constitute cognitive penetration.

3. Personal/subpersonal

Second, whether the state is personal-level is not a promising metric either.

To use a toy example, suppose (1) that there is a cheater-detection module (e.g., Cosmides et al. 2010), (2) that it houses subpersonal token propositional states in an information store it uses for typical computations, and (3) that they are accessed by the perceptual system, under rare conditions, in a way that changes the content of the percept in a semantically coherent way. (NB: (3) doesn’t count as a violation of the encapsulation of the cheater-detection module, since it only stipulates that the states are accessed by perception, not that they’re modified by perception.) Why would this not count as a case of cognitive penetration? It strikes me that it would, since information stored in central cognition is being accessed by perceptual systems in a way that alters the content of the percept in a semantically coherent way. Or consider implicit bias again. Even if implicit biases should be understood as subpersonal in addition to being inaccessible to consciousness, it still would seem that any direct effect they have on perceptual processing should count as cognitive penetration.

4. Conclusion

It seems that what matters in deciding whether a state’s influence on perception constitutes cognitive penetration is not whether the state is consciously accessible or personal-level, but rather whether it is a genuinely cognitive, non-perceptual state. The division between high-level info and cognitive states might, therefore, be drawn by appeal to informational encapsulation, representational format (though see Quilty-Dunn 2016), use in proprietarily perceptual algorithms, or some other metric. Gatzia’s paper makes it clear why this division is crucial for understanding cognitive penetration and mental architecture more generally.

References

Baars, B.J. (1988). A Cognitive Theory of Consciousness. New York: Cambridge University Press.

Cosmides, L., Barrett, H. C., & Tooby, J. (2010). Adaptive specializations, social exchange, and the evolution of human intelligence. PNAS 107(2), 9007–9014.

Dehaene, S. & Naccache, L. (2001). Towards a cognitive neuroscience of consciousness: Basic evidence and a workspace framework. Cognition 79, 1–37.

Hahn, A., Judd, C.M., Hirsh, H.K., & Blair, I.V. (2014). Awareness of implicit attitudes. Journal of Experimental Psychology: General 143(3), 1369–1392.

Mandelbaum, E. (2016). Attitude, inference, association: On the propositional structure of implicit bias. Noûs 50(3), 629–658.

Quilty-Dunn, J. (2016). Iconicity and the format of perception. Journal of Consciousness Studies 23(3-4), 255–263.